Explosive Pentagon Contracts

Why the Challenger explosion should be a warning about artificial intelligence

Happy Tuesday from New York City.

There’s a lot going on in the world, so it’s no surprise that the Anthropic and Pentagon conflict has moved to page 2 in most publications.

But not this one. Today we’re diving deeper into the messy dispute between the Pentagon and Anthropic. We’ll look at an example of a government contract gone wrong, and at why we may be making the same mistakes again, this time with a far more pervasive and potentially dangerous technology: artificial intelligence.

Behind the paywall I’m showing you how I’m thinking about this when drafting my thriller novel. One of the main characters is a Big Tech CEO who signs an AI deal with one administration only to be criminally investigated by the next White House. If you’re interested and/or want to support the work, you know what to do.

“Take off your engineering hats.”

That’s what engineers at the government contractor, Morton Thiokol, were told after they recommended delaying the launch of the Space Shuttle Challenger in 1986. Test data from as early as 1977 had revealed a potentially catastrophic flaw in a specific part of the rocket booster when operating in cold conditions.

It was an unusually cold day in Cape Canaveral on January 28, 1986.

Which is why, shortly before takeoff, Morton Thiokol engineers tried to stop the launch. Neither NASA nor Morton Thiokol management listened.

Nobody tells the government how to operate, right?

Many of us probably know what happened next. The O-ring seals failed in Challenger’s right solid rocket booster.

Space Shuttle Challenger exploded 73 seconds into its flight, killing all seven crew members, including a teacher, as spectators on the ground and a live television audience watched.

I bring this up today because we have engineers once again in a dispute with the government over technology. But this time we aren’t debating O-rings in rocket boosters. The Pentagon is debating the safety limits of artificial intelligence with AI firms like Anthropic and OpenAI.

To what legal lengths can the government go to deploy mass surveillance AI tools domestically? What degree of human oversight is required for the use of autonomous weapons?

As I’ve written before, current laws do not protect us from all AI use cases. They’re simply outdated. Even newer laws like the Patriot Act, which allow for surveillance in certain cases, do not anticipate a world of AI tools and LLMs.

Despite these legal shortcomings, the Defense Department (I’m not calling it the Department of War) has demanded that AI firms make their technology available for “any lawful use.”

As a lawyer I can tell you this is a massive legal trap.

These three words cover not only the Patriot Act but any Executive Order the administration decides to enforce (and, as we just saw with tariffs, those are not always “lawful”). They also cover any policy change the Defense Department decides to enact.

What I’m really saying is that the Executive Branch gets to decide what is “lawful”, at least initially. It takes time for the courts to catch up and confirm whether that interpretation is correct.

Just consider a brief history of intelligence law.

You may be persuaded that the government should be able to implement “any lawful use” for AI technology until you recall that practically every bad intelligence scandal dating back decades relies on some memo filled with legal gymnastics arguing it “complied with relevant law.”

Remember “enemy combatants”? FISA courts?

Remember Edward Snowden?

He exposed the secretive National Security Agency program called PRISM that was gathering bulk data on American citizens. This program was pulling information on Americans from tech companies like Microsoft, Google, and Apple. Other programs were collecting telephone records of Verizon customers on an “ongoing, daily” basis.

The government thought it was entirely legal in 2013.

So we would be foolish to believe that what the government thinks is “technically legal” should be the standard. Especially when heads of AI firms, like Anthropic’s CEO Dario Amodei, have no idea how certain autonomous AI tools will behave beyond certain capability thresholds.

Will they target the wrong person for execution?

Will they bomb the wrong building?

And can you imagine how efficient the NSA could have been back in 2013 if they had LLMs and AI tools powering their mass surveillance of Americans?

AI could easily enable the government to connect disparate pieces of information about people and build profiles in a way that wasn’t possible just a decade ago. From pulling records out of different state and local databases to connecting them with search and browsing history and federal data, the sky is the limit with current technology.

The law has not caught up to AI’s ability to conduct mass surveillance at scale.

But for every Dario Amodei who refuses to comply with “any lawful use”, there will always be someone willing to carry water for the government.

Enter OpenAI’s Sam Altman.

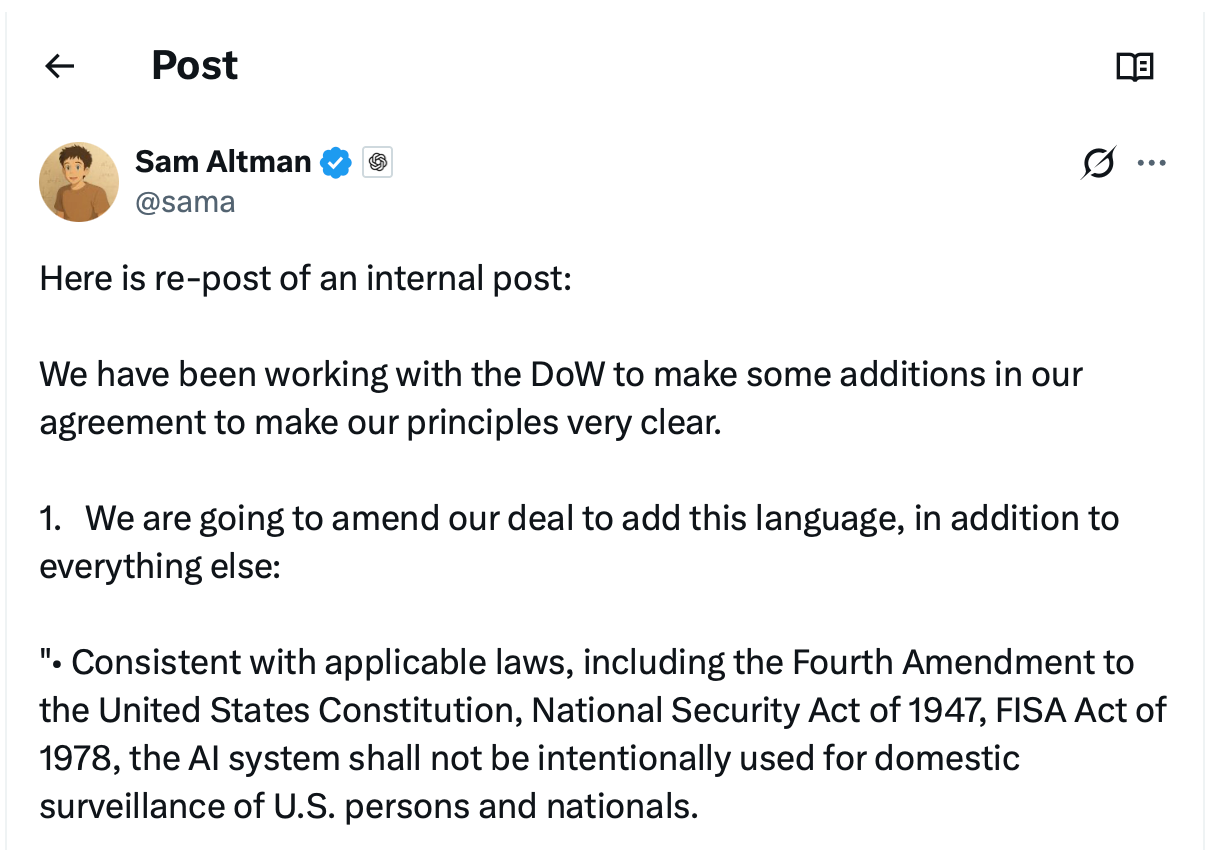

The above tweet is Altman quickly backtracking after rushing to replace Anthropic as the sole AI firm working with the government. He hurried to clarify that OpenAI’s tools “shall not be **intentionally** used for domestic surveillance of U.S. persons and nationals,” in line with relevant federal laws. He also noted that the amended contract prohibits “**deliberate** tracking, surveillance or monitoring of U.S. persons or nationals” through the use of commercially available information.

But for many people, it’s too little, too late.

Also, put on your lawyer cap with me. Notice the words “intentionally” and “deliberate” in the two clauses I quoted?

They focus on state of mind and intent. So if OpenAI’s tools are used unintentionally for domestic surveillance, that arguably wouldn’t be covered. Or if they just happen to surveil U.S. persons or nationals, that could still be “lawful.”

Given the autonomous capabilities of newer AI systems, it’s not far-fetched or hyperbolic to suggest that these systems could perform activities they were not designed or intended to do (e.g., hallucinating a surveillance target into existence). Which is why even the updated language that Altman added to the contract is far less protective than the guarantees Amodei and Anthropic were seeking.

There’s also an argument that the government could use OpenAI’s technology to search foreign intelligence databases for Americans on a large scale. Notice that the clause focuses only on domestic surveillance.

So it’s no wonder that Anthropic’s generative AI app, Claude, has gained a high profile on Apple’s App Store, hitting the top of the charts for free apps. People are voting with their attention and their dollars.

The real issue, as I’ve explained in multiple videos, is that we lack any comprehensive AI safety standards. It’s on each and every tech firm from Anthropic to OpenAI to determine what safeguards it wants to fight for.

And that’s a dangerous position to put any for-profit company in.

The government, and particularly Congress, should be leading the charge on ensuring the safety of AI for all Americans, especially in the context of mass surveillance and autonomous weapons.

Instead, the burden is on private companies like Anthropic to negotiate with the federal government over basic safety terms. When Anthropic refused to agree, Defense Secretary Pete Hegseth did not just drop it as a contractor. He declared that no government contractor can now do business with Anthropic.

Even Trump’s AI visionary, Dean W. Ball, called this “corporate murder.”

Nvidia, Microsoft, and many other tech firms that Anthropic does business with are ALL government contractors. This is an insane overreach and overreaction to reasonable requests from a contractor negotiating with the government.

And it’s likely illegal. The definition of “supply chain risk” in the federal code is specific to “adversaries.” Anthropic is hardly Huawei.

Which brings us back to the warning: not everything the government says is legal actually is.

If this had happened in 1986 before the Challenger explosion, it would have been like NASA not just telling contractors to “take off their engineering hats”, but burning the hat and the person wearing it.

Had Morton Thiokol been labeled a supply chain risk for refusing to launch the Challenger, its refusal might have saved lives and averted catastrophe, but the label would also have sent a chilling message to every other engineer in America: silence is the only way to stay in business.

It wouldn’t have just ended their contract. It would have made it illegal for every other aerospace firm to use their parts, effectively vaporizing the company for being too cautious.

This is the same intimidation tactic the Defense Department is trying to use today.

Comply or else.

It’s this same climate of intimidation that could easily lead to the next Challenger disaster and explosive Pentagon contract.

The government is currently holding the gun to the head of AI safety and innovation. I couldn’t stop thinking about the person who pulls that trigger just to save themselves, so I wrote her into my thriller novel. Meet Olivia Ottinger…